Senapati, P., Parida, P. Sci Rep 15, 13232 (2025). https://doi.org/10.1038/s41598-025-97337-0

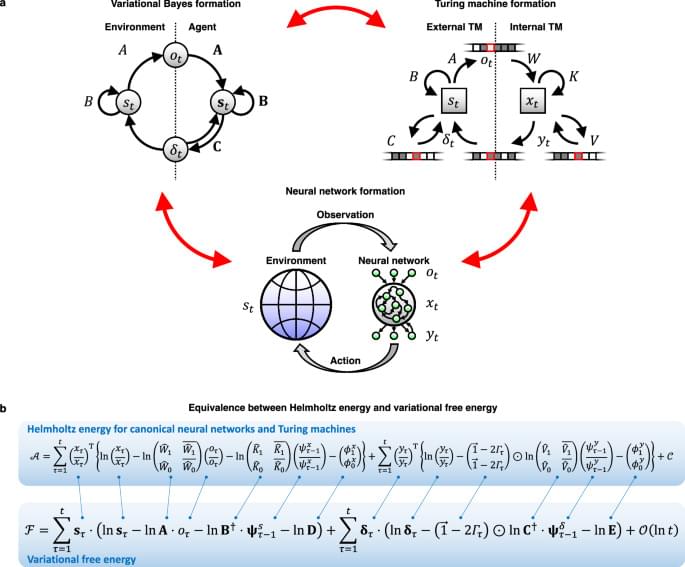

Characterizing the intelligence of biological organisms is challenging yet crucial. This paper demonstrates the capacity of canonical neural networks to autonomously generate diverse intelligent algorithms by leveraging an equivalence between concepts from three areas of cognitive computation: neural network-based dynamical systems, statistical inference, and Turing machines.

This paper introduces an adaptive multi-agent framework to enhance collaborative reasoning in large language models (LLMs). The authors address the challenge of effectively scaling collaboration and reasoning in multi-agent systems (MAS), which remains an open question despite recent advances in test-time scaling (TTS) for single-agent performance.

The core methodology revolves around three key contributions:

1. **Dataset Construction:** The authors create a high-quality dataset, M500, comprising 500 multi-agent collaborative reasoning traces. This dataset is generated automatically using an open-source MAS framework (AgentVerse) and a strong reasoning model (DeepSeek-R1). To ensure quality, questions are selected based on difficulty, diversity, and interdisciplinarity. During generation, multiple agents with different roles collaborate to solve challenging problems. The resulting traces are then filtered on three criteria: Consensus Reached (the agents agree), Format Compliance (adherence to the specified format, e.g., the required tags and `\boxed{}`), and Correctness (the final answer is right). The data generation is described in Algorithm 1 in the Appendix.
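The three filtering criteria can be illustrated with a minimal sketch. This is not the authors' Algorithm 1: the `Trace` fields, the function names, and the exact-string comparison of answers are all assumptions made for illustration, and the format check only looks for a `\boxed{}` answer because the other required tags are not recoverable from the summary above.

```python
import re
from dataclasses import dataclass
from typing import List

# Hypothetical record layout; the real M500 trace format may differ.
@dataclass
class Trace:
    question: str
    agent_answers: List[str]  # final answer reported by each agent
    response: str             # full text of the final response
    reference_answer: str     # ground-truth answer for the question

# Matches a literal \boxed{...} with no nested braces (an assumed simplification).
BOXED = re.compile(r"\\boxed\{([^{}]*)\}")

def consensus_reached(trace: Trace) -> bool:
    """All agents report the same answer (compared after stripping whitespace)."""
    answers = {a.strip() for a in trace.agent_answers}
    return len(trace.agent_answers) > 0 and len(answers) == 1

def format_compliant(trace: Trace) -> bool:
    """The final response contains a \\boxed{} answer."""
    return BOXED.search(trace.response) is not None

def is_correct(trace: Trace) -> bool:
    """The boxed answer equals the reference answer exactly (assumed criterion)."""
    match = BOXED.search(trace.response)
    return match is not None and match.group(1).strip() == trace.reference_answer.strip()

def filter_traces(traces: List[Trace]) -> List[Trace]:
    """Keep only traces that pass all three checks."""
    return [t for t in traces
            if consensus_reached(t) and format_compliant(t) and is_correct(t)]
```

Applying the three checks as independent predicates keeps each criterion easy to swap out, for example replacing exact string matching with a symbolic equivalence check for mathematical answers.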

An international collaboration between four scientists from Mainz, Valencia, Madrid, and Zurich has published new research in the Proceedings of the National Academy of Sciences, shedding light on the most significant increase in complexity in the history of life’s evolution on Earth: the origin of the eukaryotic cell.

While the endosymbiotic theory is widely accepted, the billions of years that have passed since the fusion of an archaeon and a bacterium have left the phylogenetic tree without evolutionary intermediates before the emergence of the eukaryotic cell. This gap in our knowledge is referred to as the black hole at the heart of biology.

“The new study is a blend of theoretical and observational approaches that quantitatively understands how the genetic architecture of life was transformed to allow such an increase in complexity,” stated Dr. Enrique M. Muro, representative of Johannes Gutenberg University Mainz (JGU) in this project.

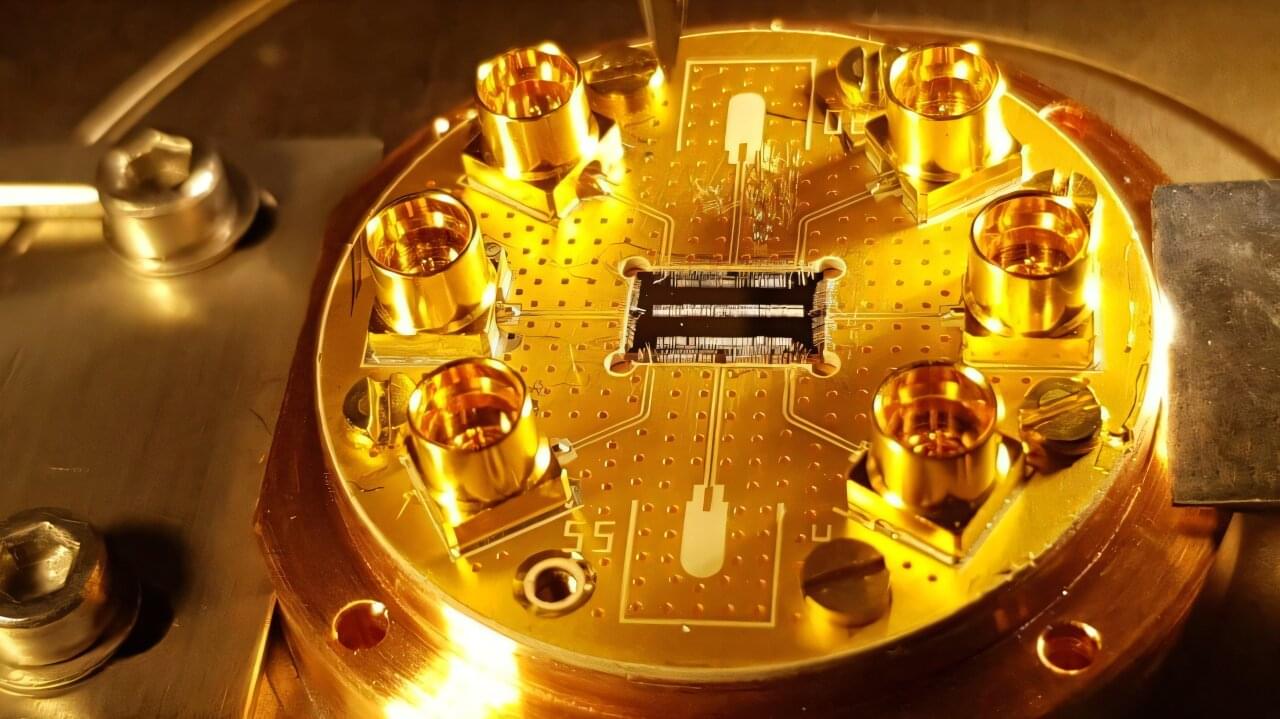

Scientists at EPFL have made a breakthrough in designing arrays of resonators, the basic components that power quantum technologies. This innovation could create smaller, more precise quantum devices.

Qubits, or quantum bits, are mostly known for their role in quantum computing, but they are also used in analog quantum simulation, which uses one well-controlled quantum system to simulate another more complex one. An analog quantum simulator can be more efficient than a digital computer simulation, in the same way that it is simpler to use a wind tunnel to simulate the laws of aerodynamics instead of solving many complicated equations to predict airflow.

Key to both digital quantum computing and analog quantum simulation is the ability to shape the environment with which the qubits are interacting. One tool for doing this effectively is a coupled cavity array (CCA), tiny structures made of multiple microwave cavities arranged in a repeating pattern where each cavity can interact with its neighbors. These systems can give scientists new ways to design and control quantum systems.
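To make the idea of neighbor-coupled cavities concrete, here is a minimal sketch of the textbook tight-binding picture of such an array. It is not the EPFL design: it assumes identical cavities arranged in a ring, coupled only to their nearest neighbors, with a bare frequency `omega_c` and a coupling rate `coupling_j` that are hypothetical parameters. Under those assumptions the collective mode frequencies are `omega_c + 2 * coupling_j * cos(2 * pi * m / n)`.

```python
import math
from typing import List

def ring_mode_frequencies(n_cavities: int, omega_c: float, coupling_j: float) -> List[float]:
    """Mode frequencies of a ring of identical cavities with nearest-neighbor coupling.

    Assumes the standard tight-binding model with periodic boundary conditions,
    so mode m has frequency omega_c + 2 * J * cos(2 * pi * m / N).
    """
    if n_cavities < 3:
        raise ValueError("a ring needs at least three cavities")
    return [
        omega_c + 2.0 * coupling_j * math.cos(2.0 * math.pi * m / n_cavities)
        for m in range(n_cavities)
    ]

def bandwidth(frequencies: List[float]) -> float:
    """Width of the band of collective modes formed by the coupled cavities."""
    return max(frequencies) - min(frequencies)

if __name__ == "__main__":
    # Illustrative values only (e.g., in GHz); not taken from the article.
    modes = ring_mode_frequencies(n_cavities=8, omega_c=5.0, coupling_j=0.1)
    for m, freq in enumerate(modes):
        print(f"mode {m}: {freq:.4f}")
    print(f"bandwidth: {bandwidth(modes):.4f}")
```

The point of the sketch is that coupling turns one shared cavity frequency into a band of collective modes whose width is set by the coupling strength, which is the kind of structured environment a qubit can then interact with.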

In this episode, renowned AI researcher Pedro Domingos, author of The Master Algorithm, takes us deep into the world of Connectionism—the AI tribe behind neural networks and the deep learning revolution.

From the birth of neural networks in the 1940s to the explosive rise of transformers and ChatGPT, Pedro unpacks the history, breakthroughs, and limitations of connectionist AI. Along the way, he explores how supervised learning continues to quietly power today’s most impressive AI systems—and why reinforcement learning and unsupervised learning are still lagging behind.

We also dive into:

- The tribal war between Connectionists and Symbolists
- The surprising origins of Backpropagation
- How transformers redefined machine translation
- Why GANs and generative models exploded (and then faded)
- The myth of modern reinforcement learning (DeepSeek, RLHF, etc.)
- The danger of AI research narrowing too soon around one dominant approach

Whether you’re an AI enthusiast, a machine learning practitioner, or just curious about where intelligence is headed, this episode offers a rare deep dive into the ideological foundations of AI—and what’s coming next.

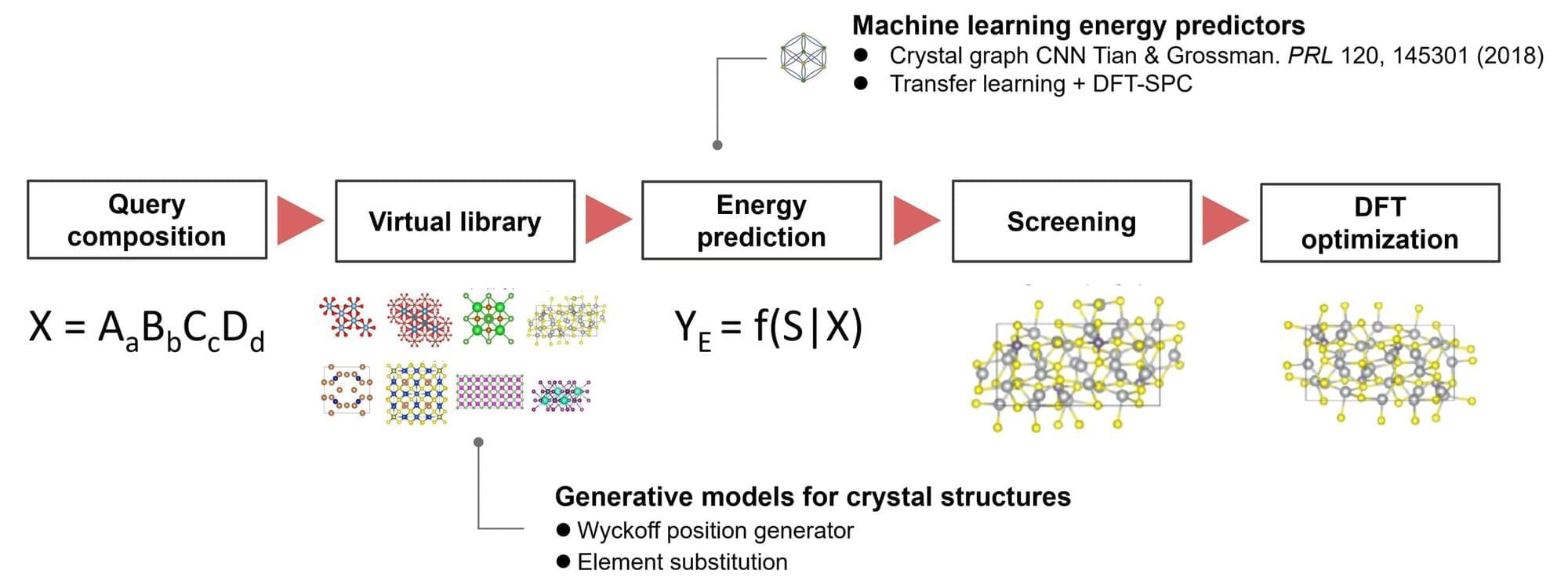

A research team from the Institute of Statistical Mathematics and Panasonic Holdings Corporation has developed a machine learning algorithm, ShotgunCSP, that enables fast and accurate prediction of crystal structures from material compositions. The algorithm achieved world-leading performance in crystal structure prediction benchmarks.

Crystal structure prediction seeks to identify the stable or metastable crystal structures that a given chemical compound adopts under specific conditions. Traditionally, this process relies on iterative energy evaluations using time-consuming first-principles calculations and on solving energy minimization problems to find stable atomic configurations. This challenge has been a cornerstone of materials science since the early 20th century.

Recently, advancements in computational technology and generative AI have enabled new approaches in this field. However, for large-scale or complex molecular systems, the exhaustive exploration of vast phase spaces demands enormous computational resources, making it an unresolved issue in materials science.

We still don’t know what “consciousness” actually means. But in a new study, researchers have used the equations of quantum mechanics to determine a brain’s “criticality,” a measure which allows them to separate waking brains from sleeping ones. I think they’re onto something. Let’s take a look.

Paper: https://journals.aps.org/pre/abstract…