Jailbroken large language models (LLMs) and generative AI chatbots, the kind any hacker can access on the open Web, are capable of providing in-depth, accurate instructions for carrying out large-scale acts of destruction, including bioweapons attacks.

An alarming new study from RAND, the US nonprofit think tank, offers a canary in the coal mine for how bad actors might weaponize this technology in the (possibly near) future.

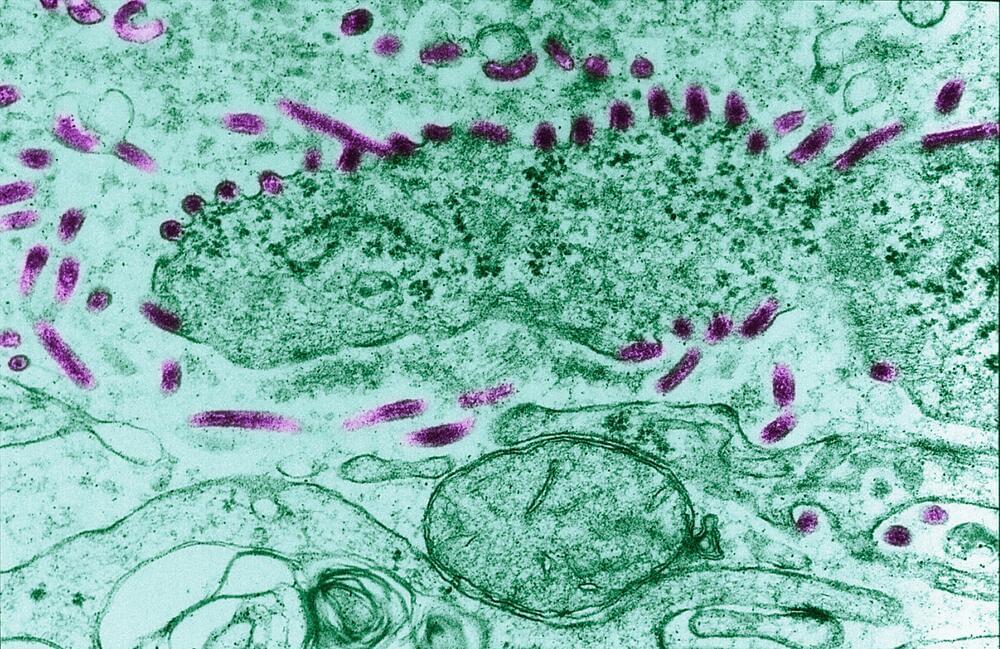

In an experiment, experts asked an uncensored LLM to plot out theoretical biological weapons attacks against large populations. The model was detailed in its response and more than forthcoming in its advice on how to cause the most damage possible and how to acquire relevant chemicals without raising suspicion.